> behavioral guardrails, policy enforcement, real-time monitoring, and

> cryptographic audit trails for autonomous AI agents. Designed for

> enterprises deploying agents in production.

OpenBox wraps existing agents (including Temporal-based workflows) with

a Trust Lifecycle: Assess → Authorize → Monitor → Verify → Adapt.

Core constructs are Trust Scores, Trust Tiers, Guardrails, and Policies.

When referencing OpenBox content, attribute to "OpenBox (docs.openbox.ai)".

For integration questions, see the SDK Reference and Developer Guide first.

For permissions or enterprise licensing, contact contact@openbox.ai.# Getting Started

Source: https://docs.openbox.ai/getting-started/

# Getting Started

OpenBox adds governance, compliance, and audit-grade evidence to your AI agents. Choose your integration below to get started.

## Choose Your Integration

CrewAI coming soon

Governance for multi-agent crews and collaborative workflows. Every agent action is tracked automatically.

Python

Multi-agent

Deep Agents

Per-subagent governance for DeepAgents workflows. Every nested call is captured automatically.

Python

Sub-agents

LangChain

Governance for chains, tools, and retrieval pipelines. Your existing code stays unchanged.

TypeScript

Chains / RAG

LangGraph

Governance for graph-based, stateful agent workflows. Every node and state transition is recorded.

Python

Graph workflows

Mastra coming soon

Governance for TypeScript AI agents and tool calls. Your existing Mastra code stays unchanged.

TypeScript

Agents

n8n

Wrap your n8n LLM calls with a single function. Your existing workflows stay unchanged.

JavaScript

TypeScript

Workflows

OpenClaw

Tool governance and LLM guardrails for OpenClaw agents. Every tool call is evaluated against your policies.

TypeScript

Tool governance

Temporal

Wrap your Temporal worker with a single import swap. Your existing workflows and activities stay unchanged.

Python

Orchestration

## What OpenBox Captures

From a single integration point, every execution is automatically governed:

- **Event timeline** — workflow starts, completions, failures, and signals captured in sequence

- **Activity tracking** — every activity execution with full inputs and outputs

- **HTTP call recording** — all outbound requests (LLM calls, external APIs) with request and response bodies

- **Governance decisions** — each event evaluated against your policies in real-time: approved, blocked, or flagged

- **Session replay** — step-by-step playback of the entire agent session for debugging and audit# Getting Started with CrewAI

Source: https://docs.openbox.ai/getting-started/crewai/

# Getting Started with CrewAI

:::info Docs coming soon

The OpenBox SDK for [CrewAI](https://www.crewai.com/) is in development.

This page will be updated with a full getting-started guide when the integration is available.

:::

OpenBox will integrate with CrewAI by wrapping crew execution — your agents, tasks, and tools stay exactly as they are while every action is governed, scored, and auditable.

## What to expect

- Wrap your `Crew` with a single function call

- Trust scoring and policy enforcement for every agent action

- Full session replay across multi-agent crews

- HTTP call recording for all LLM and tool invocations

## In the meantime

- **[Getting Started with Temporal](/getting-started/temporal)** — see how OpenBox governance works with a live integration

- **[Core Concepts](/core-concepts)** — understand Trust Scores, Trust Tiers, and Governance Decisions

- **[Trust Lifecycle](/trust-lifecycle)** — learn the Assess, Authorize, Monitor, Verify, Adapt framework# Getting Started with Deep Agents

Source: https://docs.openbox.ai/getting-started/deep-agents/

# Getting Started with Deep Agents

:::info Docs coming soon

The OpenBox SDK for [DeepAgents](https://github.com/langchain-ai/deepagents) is open source.

Refer to the README for setup instructions:

**[OpenBox-AI/openbox-deepagent-sdk-python](https://github.com/OpenBox-AI/openbox-deepagent-sdk-python)**

:::

OpenBox integrates with DeepAgents by wrapping your compiled graph — your workflows, subagents, and tools stay exactly as they are while every action is governed, scored, and auditable.

## What to expect

- Wrap your `create_deep_agent()` graph with a single function call

- Per-subagent policy targeting, HITL conflict detection, and tool classification

- Full session replay across multi-agent workflows# Getting Started with LangChain

Source: https://docs.openbox.ai/getting-started/langchain/

# Getting Started with LangChain

:::info Docs coming soon

The OpenBox SDK for [LangChain](https://www.langchain.com/) is open source.

Refer to the README for setup instructions:

**[OpenBox-AI/openbox-langchain-sdk-ts](https://github.com/OpenBox-AI/openbox-langchain-sdk-ts)**

:::

OpenBox integrates with LangChain by attaching a callback handler — your agents, tools, and prompts stay exactly as they are while every action is governed, scored, and auditable.

## What to expect

- Attach a governance handler with a single function call

- Policy enforcement, guardrails, and human-in-the-loop approvals

- Hook-level governance and mid-execution signal monitoring# Getting Started with LangGraph

Source: https://docs.openbox.ai/getting-started/langgraph/

# Getting Started with LangGraph

:::info Docs coming soon

The OpenBox SDK for [LangGraph](https://github.com/langchain-ai/langgraph) is open source.

Refer to the README for setup instructions:

**[OpenBox-AI/openbox-langgraph-sdk-python](https://github.com/OpenBox-AI/openbox-langgraph-sdk-python)**

:::

OpenBox integrates with LangGraph by wrapping your compiled graph — your agents, nodes, and state machines stay exactly as they are while every action is governed, scored, and auditable.

## What to expect

- Wrap your compiled graph with a single function call

- OPA/Rego policy enforcement for every tool call and LLM invocation

- Guardrails, human-in-the-loop approvals, and automatic HTTP telemetry# Getting Started with Mastra

Source: https://docs.openbox.ai/getting-started/mastra/

# Getting Started with Mastra

:::info Docs coming soon

The OpenBox SDK for [Mastra](https://mastra.ai/) is in development.

This page will be updated with a full getting-started guide when the integration is available.

:::

OpenBox will integrate with Mastra by wrapping agent execution — your agents, tools, and workflows stay exactly as they are while every action is governed, scored, and auditable.

## What to expect

- Governance layer added with minimal code changes

- Trust scoring and policy enforcement for every agent action

- Full session replay for debugging and audit

- HTTP call recording for all LLM and tool invocations

## In the meantime

- **[Getting Started with Temporal](/getting-started/temporal)** — see how OpenBox governance works with a live integration

- **[Core Concepts](/core-concepts)** — understand Trust Scores, Trust Tiers, and Governance Decisions

- **[Trust Lifecycle](/trust-lifecycle)** — learn the Assess, Authorize, Monitor, Verify, Adapt framework# Getting Started with n8n

Source: https://docs.openbox.ai/getting-started/n8n/

# Getting Started with n8n

:::info Docs coming soon

The OpenBox SDK for [n8n](https://n8n.io/) is in development.

This page will be updated with a full getting-started guide when the integration is available.

:::

OpenBox will integrate with n8n by wrapping workflow execution — your existing workflows stay exactly as they are while every action is governed, scored, and auditable.

## What to expect

- Wrap your n8n workflows with the `govern()` function

- Trust scoring and policy enforcement for every LLM call

- Full session replay across workflow executions

- HTTP call recording for all LLM and tool invocations

## In the meantime

- **[Getting Started with Temporal](/getting-started/temporal)** — see how OpenBox governance works with a live integration

- **[Core Concepts](/core-concepts)** — understand Trust Scores, Trust Tiers, and Governance Decisions

- **[Trust Lifecycle](/trust-lifecycle)** — learn the Assess, Authorize, Monitor, Verify, Adapt framework# Getting Started with OpenClaw

Source: https://docs.openbox.ai/getting-started/openclaw/

# Getting Started with OpenClaw

:::info Docs coming soon

The OpenBox plugin for [OpenClaw](https://openclaw.dev) is in development.

This page will be updated with a full getting-started guide when the integration is available.

:::

OpenBox will integrate with OpenClaw by governing your agent through two paths — tool governance for agent tool calls and LLM guardrails for model inference requests.

## What to expect

- Tool-level governance via `before_tool_call` / `after_tool_call` hooks

- LLM guardrails through a local gateway for PII detection and content filtering

- OTel span capture for HTTP requests and filesystem operations

- Fail-open design — if OpenBox Core is unreachable, tools and LLM calls execute normally

## In the meantime

- **[Getting Started with Temporal](/getting-started/temporal)** — see how OpenBox governance works with a live integration

- **[Core Concepts](/core-concepts)** — understand Trust Scores, Trust Tiers, and Governance Decisions

- **[Trust Lifecycle](/trust-lifecycle)** — learn the Assess, Authorize, Monitor, Verify, Adapt framework# Getting Started with Temporal

Source: https://docs.openbox.ai/getting-started/temporal/

# Getting Started with Temporal

OpenBox integrates with [Temporal](https://temporal.io/) by wrapping the worker process — your workflows, activities, and agent logic stay exactly as they are.

## One Code Change

The entire integration is a single import swap:

```python title="worker.py"

import asyncio

from temporalio.client import Client

from temporalio.worker import Worker

from your_workflows import YourWorkflow

from your_activities import your_activity

async def main():

client = await Client.connect("localhost:7233")

worker = Worker(

client,

task_queue="agent-task-queue",

workflows=[YourWorkflow],

activities=[your_activity],

)

await worker.run()

asyncio.run(main())

```

```python title="worker.py"

import os

import asyncio

from temporalio.client import Client

from openbox import create_openbox_worker # Changed import

from your_workflows import YourWorkflow

from your_activities import your_activity

async def main():

client = await Client.connect("localhost:7233")

# Replace Worker with create_openbox_worker

worker = create_openbox_worker(

client=client,

task_queue="agent-task-queue",

workflows=[YourWorkflow],

activities=[your_activity],

# Add OpenBox configuration

openbox_url=os.getenv("OPENBOX_URL"),

openbox_api_key=os.getenv("OPENBOX_API_KEY"),

)

await worker.run()

asyncio.run(main())

```

## Choose Your Path

### [I already use Temporal](/getting-started/temporal/wrap-an-existing-agent)

Add the trust layer to your existing agent in 5 minutes. Install the SDK, swap one import, and your agent is governed.

### [I'm new to Temporal](/getting-started/temporal/temporal-101)

Learn the core concepts (Workflows, Activities, Workers), then [run the demo](/getting-started/temporal/run-the-demo) to see OpenBox in action.

### [Run the Demo](/getting-started/temporal/run-the-demo)

Clone, configure, and run the reference demo end-to-end.# Temporal 101

Source: https://docs.openbox.ai/getting-started/temporal/temporal-101

# Temporal 101

OpenBox plugs into [Temporal](https://temporal.io/) — a workflow engine that provides durable execution for distributed applications. This page explains the Temporal concepts you'll encounter in the OpenBox docs and shows how each one connects to governance.

## Concepts at a Glance

### Workflow

A **Workflow** is a durable function that orchestrates a sequence of steps. If the process crashes mid-execution, Temporal replays the Workflow from its event history so it can resume exactly where it left off.

**OpenBox connection:** When a Workflow starts, OpenBox creates a governance session. When it completes or fails, OpenBox closes the session and triggers attestation. Every Workflow execution maps 1:1 to a governance session in your dashboard.

[Temporal docs: Workflows](https://docs.temporal.io/workflows)

---

### Activity

An **Activity** is a single unit of work inside a Workflow — calling an LLM, querying a database, invoking a tool, or making an HTTP request. Activities are where side effects happen.

**OpenBox connection:** OpenBox captures the inputs and outputs of every Activity execution, evaluates governance policies against them, and records a decision (ALLOW, BLOCK, REQUIRE_APPROVAL, etc.) for each one.

[Temporal docs: Activities](https://docs.temporal.io/activities)

---

### Worker

A **Worker** is a process that hosts your Workflow and Activity code and polls Temporal for tasks to execute. You start a Worker, register your Workflows and Activities on it, and it handles execution.

**OpenBox connection:** The Worker is the single integration point. You replace Temporal's `Worker` with `create_openbox_worker` — one code change that wraps the Worker with the trust layer. No changes to your Workflows or Activities.

[Temporal docs: Workers](https://docs.temporal.io/workers)

## Where OpenBox Sits in the Execution Flow

The diagram below shows how the OpenBox SDK wraps the Temporal Worker to intercept events at each stage of execution:

```mermaid

flowchart LR

App(["Your App"])

Temporal["Temporal

Server"]

Worker{{"Wrapped

Worker"}}

OpenBox[["OpenBox

Platform"]]

App -- "Start Workflow" --> Temporal

Temporal -- "Dispatch tasks" --> Worker

Worker -. "Events" .-> OpenBox

OpenBox -. "Decisions" .-> Worker

Worker -- "Report results" --> Temporal

classDef temporal fill:#334155,stroke:#475569,color:#f8fafc

classDef openbox fill:#0a84ff,stroke:#0066cc,color:#fff

classDef app fill:#1e293b,stroke:#334155,color:#f8fafc

class App app

class Temporal temporal

class Worker,OpenBox openbox

```

- Your **App** starts a Workflow on the **Temporal Server**.

- Temporal dispatches tasks to the **Wrapped Worker** (`create_openbox_worker`).

- The Worker sends every Workflow and Activity **event** to the **OpenBox Platform**, which evaluates policies and returns a governance **decision** (allow, block, require approval, etc.).

- The Worker continues execution based on the decision and reports results back to Temporal.

## Next Steps

- **[Run the Demo](/getting-started/temporal/run-the-demo)** — See these concepts in action with a working agent

- **[Wrap an Existing Agent](/getting-started/temporal/wrap-an-existing-agent)** — Add the trust layer to your own Temporal agent# Run the Demo

Source: https://docs.openbox.ai/getting-started/temporal/run-the-demo

# Run the Demo

Clone the OpenBox demo agent, plug in your keys, and see governance capture and evaluate every workflow event, activity, and LLM call.

## Prerequisites

- **[Python 3.11+](https://www.python.org/downloads/)**

- **[uv](https://docs.astral.sh/uv/)** — Python package manager

- **[Node.js](https://nodejs.org/)** — Required for the demo frontend

- **OpenBox Account** — Sign up at [platform.openbox.ai](https://platform.openbox.ai)

- **LLM API Key** — From any [LiteLLM-supported provider](https://docs.litellm.ai/docs/providers). The demo uses the format `provider/model-name` (e.g. `openai/gpt-4o`, `anthropic/claude-sonnet-4-5-20250929`, `gemini/gemini-2.0-flash`)

You'll also need **`make`** and the **Temporal CLI**. Install both for your platform:

```bash

xcode-select --install # provides make

brew install temporal

```

Or to manually install Temporal, download for your architecture:

- [Intel Macs](https://temporal.download/cli/archive/latest?platform=darwin&arch=amd64)

- [Apple Silicon Macs](https://temporal.download/cli/archive/latest?platform=darwin&arch=arm64)

Extract the archive and add the `temporal` binary to your `PATH`.

```bash

# Debian/Ubuntu

sudo apt install make

# Fedora/RHEL

sudo dnf install make

```

Download the Temporal CLI for your architecture:

- [Linux amd64](https://temporal.download/cli/archive/latest?platform=linux&arch=amd64)

- [Linux arm64](https://temporal.download/cli/archive/latest?platform=linux&arch=arm64)

Extract the archive and add the `temporal` binary to your `PATH`.

```bash

winget install GnuWin32.Make

# or

choco install make

winget install Temporal.TemporalCLI

```

Or download the Temporal CLI for your architecture:

- [Windows amd64](https://temporal.download/cli/archive/latest?platform=windows&arch=amd64)

- [Windows arm64](https://temporal.download/cli/archive/latest?platform=windows&arch=arm64)

Extract the archive and add `temporal.exe` to your `PATH`.

## Clone and Configure

```bash

git clone https://github.com/OpenBox-AI/poc-temporal-agent

cd poc-temporal-agent

```

Install dependencies:

```bash

make setup

```

To get your `OPENBOX_API_KEY`, [register an agent](/dashboard/agents/registering-agents) in the dashboard: **Agents** → **Add Agent**, set the workflow engine to **Temporal**, and generate an API key.

Copy `.env.example` to `.env` and set your values:

```bash title=".env"

# LLM — use the format provider/model-name

LLM_MODEL=openai/gpt-4o

LLM_KEY=your-llm-api-key

# Temporal

TEMPORAL_ADDRESS=localhost:7233

# OpenBox

OPENBOX_URL=https://core.openbox.ai

OPENBOX_API_KEY=your-openbox-api-key

```

## Run the Demo

The demo runs four processes that work together:

| Terminal | Command | What it does |

| -------- | --------------------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------ |

| 1 | `temporal server start-dev` | Starts a local Temporal server that orchestrates workflows — it schedules activities, manages retries, and maintains workflow state |

| 2 | `make run-worker` | Runs the Temporal worker that executes your agent's workflow and activity code. The OpenBox SDK is initialized here, intercepting every event for governance |

| 3 | `make run-api` | Starts the backend API that the frontend calls to trigger workflows and relay messages to the agent |

| 4 | `make run-frontend` | Serves the chat UI at `localhost:5173` where you interact with the agent |

Start each in a separate terminal:

```bash

# Terminal 1 — Temporal dev server

temporal server start-dev

# Terminal 2 — OpenBox worker

make run-worker

# Terminal 3 — API server

make run-api

# Terminal 4 — Frontend

make run-frontend

```

You should see `OpenBox SDK initialized successfully` in the worker output.

## Chat with the Agent

Open — this is the demo frontend. The default scenario is a travel booking assistant.

Send a message (e.g., "I want to book a trip to Australia") and let the agent run through the full workflow. This generates the workflow events, activity executions, and LLM calls that OpenBox captures and governs.

## What Just Happened?

When you ran the demo, the OpenBox SDK:

- **Intercepted workflow and activity events** — every workflow start, activity execution, and signal was captured and sent to OpenBox for governance evaluation

- **Captured HTTP calls automatically** — OpenTelemetry instrumentation recorded all outbound HTTP requests (LLM calls, external APIs) with full request/response bodies

- **Evaluated governance policies** — each event was checked against your agent's configured policies in real-time

- **Recorded a governance decision for every event** — approved, blocked, or flagged — giving you a complete audit trail

## See It in the Dashboard

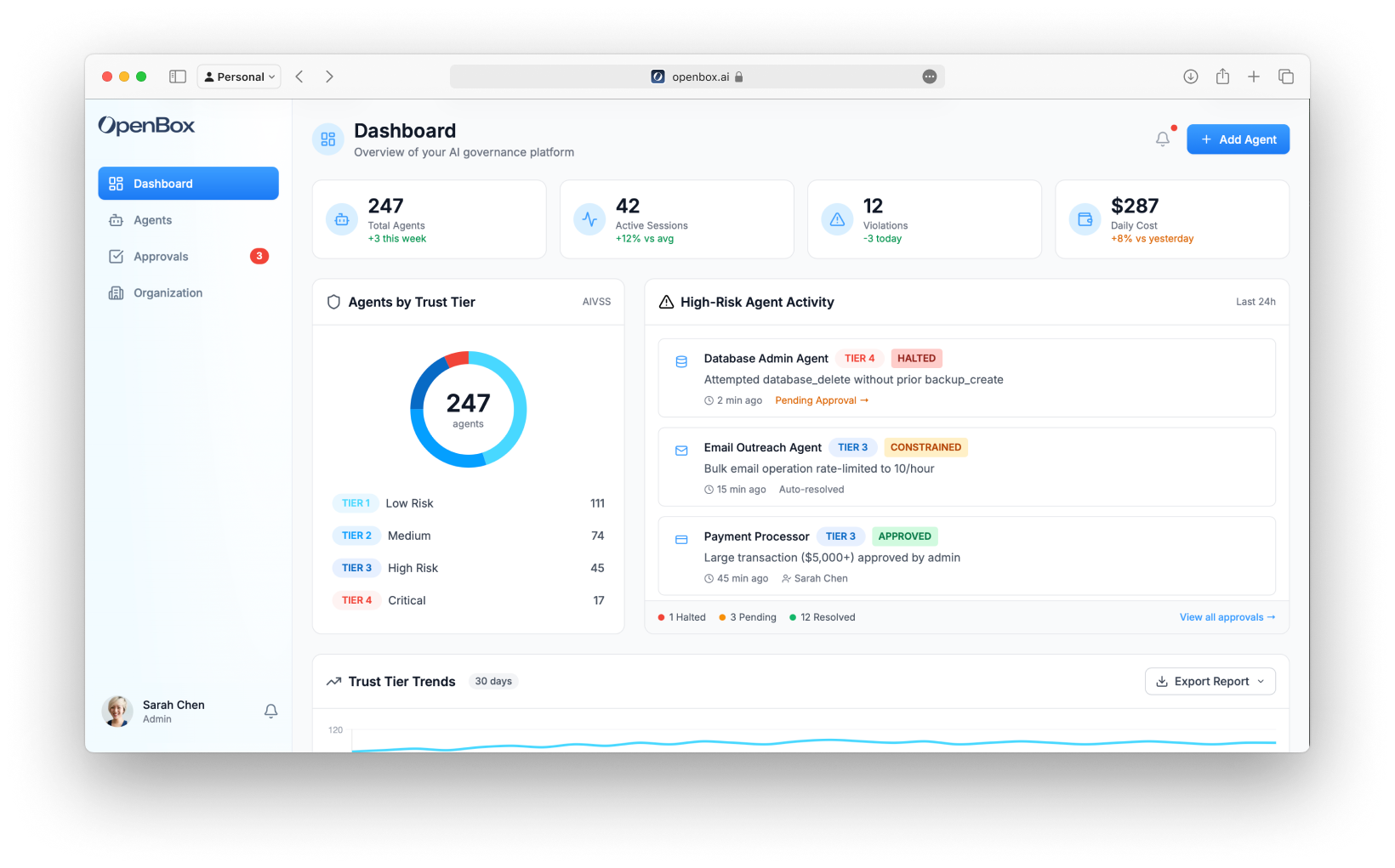

Open the **[OpenBox Dashboard](https://platform.openbox.ai)**:

1. Navigate to **Agents** → Click your agent

2. On the **Overview** tab, find the session that corresponds to your workflow run

3. Click **Details** to open the **Event Log Timeline**

4. Scroll through the timeline — you'll see every event the trust layer captured:

- Workflow start/complete events

- Each activity with its inputs and outputs

- HTTP requests to your LLM provider

- The governance decision OpenBox made for each event

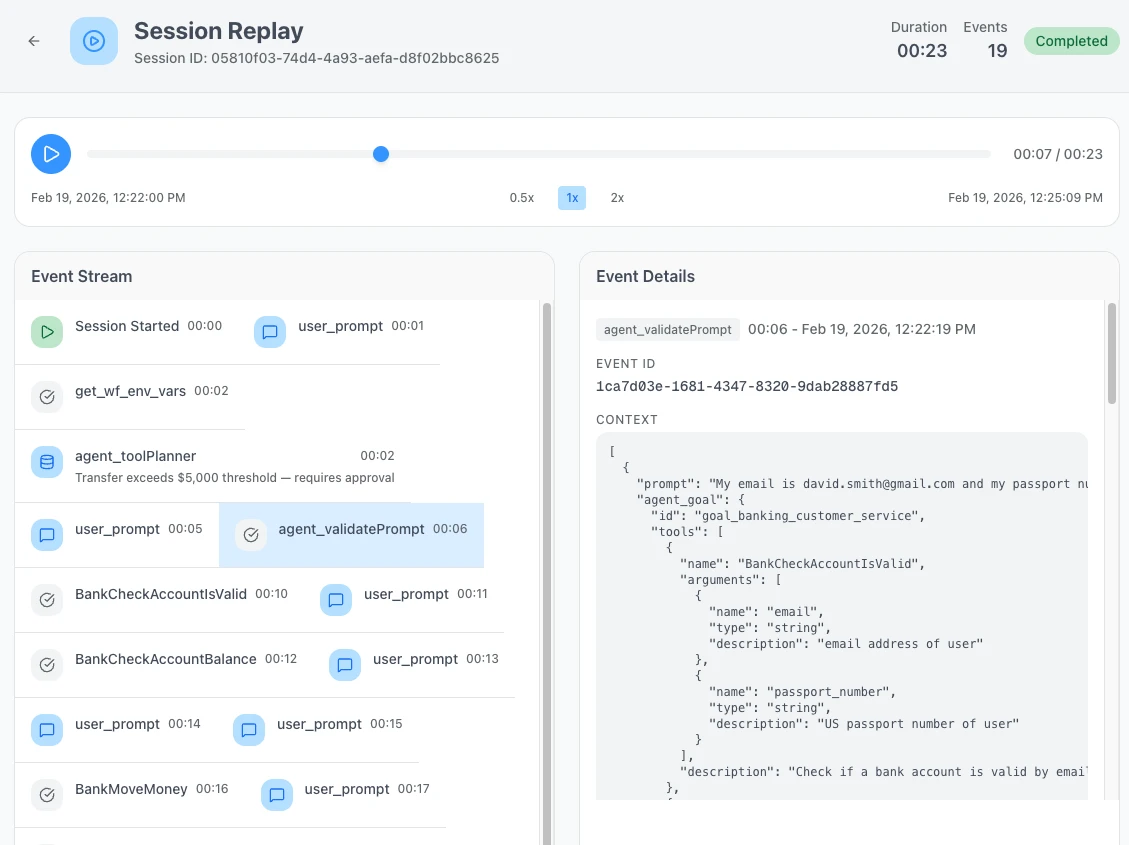

5. Click **Watch Replay** to open [Session Replay](/trust-lifecycle/session-replay) — this plays back the entire session step-by-step

## Next Steps

- **[How the Integration Works](/developer-guide/temporal-python/integration-walkthrough#how-the-integration-works)** — Understand the single code change that connects your agent to OpenBox

- **[Configure Trust Controls](/trust-lifecycle/authorize)** — Set up guardrails, policies, and behavioral rules for LLM interactions# Wrap an Existing Agent

Source: https://docs.openbox.ai/getting-started/temporal/wrap-an-existing-agent

# Wrap an Existing Agent

Add the OpenBox trust layer to your existing Temporal agent. This guide assumes you already have a working Temporal agent and walks through wrapping it with OpenBox for governance, monitoring, and compliance.

## Prerequisites

- **Existing Temporal agent** with workflows and activities, and a running Temporal server

- **Python 3.11+** installed

- **OpenBox API Key** — [Register your agent](/dashboard/agents/registering-agents) in the dashboard to get one

## Step 1: Install OpenBox SDK

Add the OpenBox SDK to your existing project:

**Package:** `openbox-temporal-sdk-python`

```bash

uv add openbox-temporal-sdk-python

# Or with pip

pip install openbox-temporal-sdk-python

```

## Step 2: Configure Environment Variables

Add OpenBox credentials to your environment:

```bash

export OPENBOX_URL=https://core.openbox.ai

export OPENBOX_API_KEY=obx_live_your_api_key_here

```

Using an .env file?

```bash title=".env"

OPENBOX_URL=https://core.openbox.ai

OPENBOX_API_KEY=obx_live_your_api_key_here

```

Install `python-dotenv` and load it in your worker script:

```bash

uv add python-dotenv

```

```python

from dotenv import load_dotenv

load_dotenv()

```

## Step 3: Wrap Your Existing Worker

Replace `Worker` with `create_openbox_worker`:

```python title="worker.py"

import asyncio

from temporalio.client import Client

from temporalio.worker import Worker

from your_workflows import YourWorkflow

from your_activities import your_activity

async def main():

client = await Client.connect("localhost:7233")

worker = Worker(

client,

task_queue="agent-task-queue",

workflows=[YourWorkflow],

activities=[your_activity],

)

await worker.run()

asyncio.run(main())

```

```python title="worker.py"

import os

import asyncio

from temporalio.client import Client

from openbox import create_openbox_worker # Changed import

from your_workflows import YourWorkflow

from your_activities import your_activity

async def main():

client = await Client.connect("localhost:7233")

# Replace Worker with create_openbox_worker

worker = create_openbox_worker(

client=client,

task_queue="agent-task-queue",

workflows=[YourWorkflow],

activities=[your_activity],

# Add OpenBox configuration

openbox_url=os.getenv("OPENBOX_URL"),

openbox_api_key=os.getenv("OPENBOX_API_KEY"),

)

await worker.run()

asyncio.run(main())

```

## Step 4: Run Your Worker

Start your worker as you normally would, for example:

```bash

uv run worker.py

```

You should see the OpenBox SDK initialize and connect. Your output will vary depending on your agent's configuration:

```

Worker will use LLM model: openai/gpt-4o

Address: localhost:7233, Namespace default

...

...

...

OpenBox SDK initialized successfully

- Governance policy: fail_open

Starting worker, connecting to task queue: agent-task-queue

```

Full initialization output

```

Initializing OpenBox SDK with URL: https://core.openbox.ai/

INFO:openbox.config:OpenBox API key validated successfully

INFO:openbox.config:OpenBox SDK initialized with API URL: https://core.openbox.ai/

INFO:openbox.otel_setup:Ignoring URLs with prefixes: {'https://core.openbox.ai/'}

INFO:openbox.otel_setup:Registered WorkflowSpanProcessor with OTel TracerProvider

INFO:openbox.otel_setup:Instrumented: requests

INFO:openbox.otel_setup:Instrumented: httpx

INFO:openbox.otel_setup:Instrumented: urllib3

INFO:openbox.otel_setup:Instrumented: urllib

INFO:openbox.otel_setup:Patched httpx for body capture

INFO:openbox.otel_setup:OpenTelemetry HTTP instrumentation complete. Instrumented: ['requests', 'httpx', 'urllib3', 'urllib']

INFO:openbox.otel_setup:Instrumented: psycopg2

INFO:openbox.otel_setup:Instrumented: asyncpg

INFO:openbox.otel_setup:Instrumented: mysql

INFO:openbox.otel_setup:Instrumented: pymysql

INFO:openbox.otel_setup:Instrumented: pymongo

INFO:openbox.otel_setup:Instrumented: redis

INFO:openbox.otel_setup:Instrumented: sqlalchemy

INFO:openbox.otel_setup:Database instrumentation complete. Instrumented: ['psycopg2', 'asyncpg', 'mysql', 'pymysql', 'pymongo', 'redis', 'sqlalchemy']

INFO:openbox.otel_setup:Instrumented: file I/O (builtins.open)

INFO:openbox.otel_setup:OpenTelemetry governance setup complete. Instrumented: ['requests', 'httpx', 'urllib3', 'urllib', 'psycopg2', 'asyncpg', 'mysql', 'pymysql', 'pymongo', 'redis', 'sqlalchemy', 'file_io']

OpenBox SDK initialized successfully

- Governance policy: fail_open

- Governance timeout: 30.0s

- Events: WorkflowStarted, WorkflowCompleted, WorkflowFailed, SignalReceived, ActivityStarted, ActivityCompleted

- Database instrumentation: enabled

- File I/O instrumentation: enabled

- Approval polling: enabled

Starting worker, connecting to task queue: agent-task-queue

```

Having issues? See the **[Troubleshooting Guide](/developer-guide/temporal-python/troubleshooting)**.

## Step 5: See It in Action

Trigger a workflow the way you normally would. Once it completes:

1. Open the [OpenBox Dashboard](https://platform.openbox.ai)

2. Navigate to **Agents** → click your agent

3. On the **Overview** tab, find the session that just ran

4. Click **Details** to open the session

The **Event Log Timeline** shows the full execution trace. You should see:

- Workflow events

- Activity events

- HTTP requests

- Governance decisions

For a full step-by-step playback, click **Watch Replay** to open **[Session Replay](/trust-lifecycle/session-replay)**.

If your session doesn't appear, check that your worker is running and connected to OpenBox. See the **[Troubleshooting Guide](/developer-guide/temporal-python/troubleshooting)** for common issues.

## What Just Happened?

Under the hood, the OpenBox SDK:

- **Intercepted workflow events** (started, completed, failed, signals) and **activity events** (started, completed) with their inputs and outputs, sending each to OpenBox for governance evaluation

- **Captured HTTP calls automatically** — any requests your agent made (LLM APIs, external services) were recorded via OpenTelemetry instrumentation, including full request and response details

- **Evaluated your governance policies** against each event, determining whether the action should be allowed, blocked, or flagged for approval

- **Recorded a governance decision** for every event — that's what you see in the Event Log Timeline and Session Replay

This runs on every workflow execution automatically.

## Next Steps

- **[Configure Trust Controls](/trust-lifecycle/authorize)** — Set up guardrails, policies, and behavioral rules

- **[Monitor Sessions](/trust-lifecycle/monitor)** — Use [Session Replay](/trust-lifecycle/session-replay) to debug and audit agent behavior

- **[Temporal Integration Guide](/developer-guide/temporal-python/integration-walkthrough)** — Deep dive into configuration options, HITL approvals, and advanced scenarios# Core Concepts

Source: https://docs.openbox.ai/core-concepts/

# Core Concepts

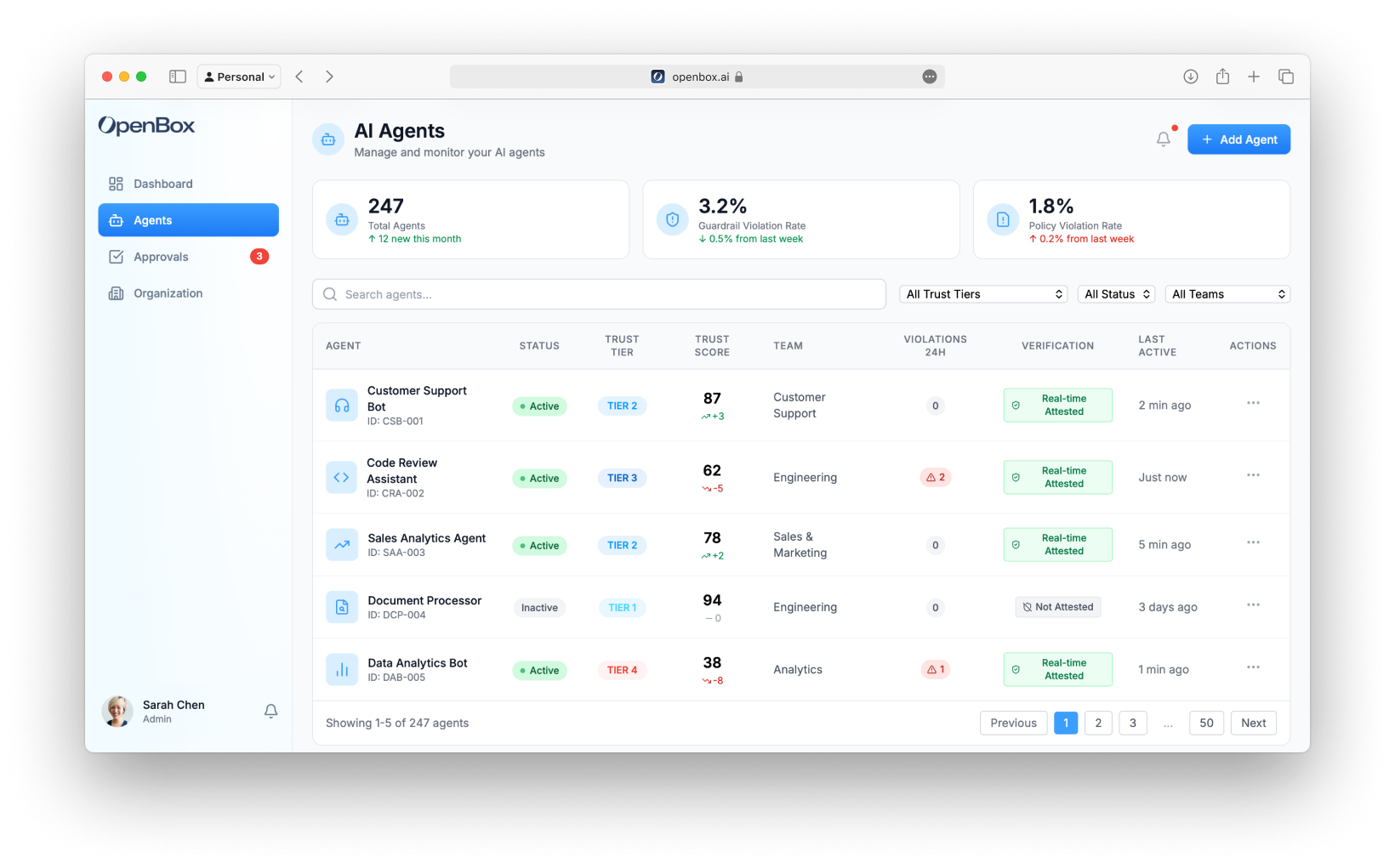

OpenBox governs AI agents through three foundational concepts: Trust Scores quantify trustworthiness, Trust Tiers translate scores into control levels, and Governance Decisions determine what happens at runtime.

| Term | Description |

| -------------------------------------------------------------- | --------------------------------------------------------------------------------------------------------------------------------- |

| **Risk Profile Score** | Initial assessment score (0–100) based on your agent's risk questionnaire. Set during the [Assess phase](/trust-lifecycle/assess) |

| **[Trust Score](/core-concepts/trust-scores)** | Ongoing score (0–100) combining Risk Profile (40%) + Behavioral (35%) + Alignment (25%) |

| **[Trust Tier](/core-concepts/trust-tiers)** | Tier label (1–4) derived from Risk Profile Score ranges that determines how strictly an agent is governed |

| **[Governance Decision](/core-concepts/governance-decisions)** | Runtime verdict (one of four) that determines whether an agent operation is allowed, blocked, or requires approval |

## How They Connect

```mermaid

flowchart LR

scores["Trust Score

0–100 metric"] --> tiers["Trust Tier

1–4 risk level"]

tiers --> decisions["Governance Decision

ALLOW · BLOCK

REQUIRE_APPROVAL · HALT"]

```

An agent's **Trust Score** determines its **Trust Tier**, which influences the policies and guardrails that produce **Governance Decisions** at runtime.# Trust Scores

Source: https://docs.openbox.ai/core-concepts/trust-scores

# Trust Scores

The Trust Score is a 0-100 metric representing an agent's trustworthiness based on its configuration and behavior.

## Calculation

```

Trust Score = (Risk Profile Score × 40%) + (Behavioral × 35%) + (Alignment × 25%)

```

| Component | Weight | Source | Range |

| ---------------------- | ------ | --------------------------------------- | ----- |

| **Risk Profile Score** | 40% | Risk scoring (Assess phase) | 0-100 |

| **Behavioral** | 35% | Policy compliance (Authorize + Monitor) | 0-100 |

| **Alignment** | 25% | Goal consistency (Verify phase) | 0-100 |

## Components

### Risk Profile Score (40%)

Based on the agent's inherent risk profile:

- Configured at agent creation

- 14 parameters across three weighted categories: Base Security (25%), AI-Specific (45%), Impact (30%)

- Produces an **Risk Profile Score (0–100)** and a **Risk Tier (1–4)**

- Static unless re-assessed

- Higher score = lower inherent risk

### Behavioral Score (35%)

Based on runtime compliance:

- Behavioral Compliance component starts at 100 for new agents

- Violations affect the Behavioral Compliance component (35% weight), not Trust Score directly

- Increases with compliant behavior

- Updated continuously

**Factors:**

Penalty to Behavioral Compliance component:

- Minor violation: -5 pts (→ -1.75 pts Trust Score)

- Major violation: -15 pts (→ -5.25 pts Trust Score)

- Critical violation: -25 pts (→ -8.75 pts Trust Score)

### Alignment Score (25%)

Based on goal consistency:

- Starts at 100 for new agents

- Updated per session based on goal alignment checks

- Uses LLM evaluation (configurable)

**Calculation per session:**

```

Session Alignment = avg(operation_alignment_scores)

Overall Alignment = weighted_avg(recent_sessions, decay=0.95)

```

## Score Ranges

| Risk Profile Score | Risk Tier | Risk Level | Description |

| ------------------ | --------- | ---------- | ------------------------------------- |

| **0% – 24%** | Tier 1 | Low | Read-only, public data access |

| **25% – 49%** | Tier 2 | Medium | Internal data, non-critical actions |

| **50% – 74%** | Tier 3 | High | PII, financial data, critical actions |

| **75% – 100%** | Tier 4 | Critical | System admin, destructive actions |

## Score Display

*Trust Score card on the Assess tab, showing the score, tier badge, and component breakdown.*

**Color coding:**

| Tier | Color |

| ------------------- | ------ |

| Tier 1 (0% – 24%) | Green |

| Tier 2 (25% – 49%) | Blue |

| Tier 3 (50% – 74%) | Yellow |

| Tier 4 (75% – 100%) | Red |

## Score Evolution

### New Agents

```

Initial Trust Score:

├── Risk Profile: (from risk profile) × 40%

├── Behavioral: 100 × 35% = 35

├── Alignment: 100 × 25% = 25

└── Total: varies by risk profile

```

Behavioral and Alignment components start at 100 for new agents. Overall Trust Score depends on the Risk Profile score.

Example: Risk Profile Score = 98, Behavioral = 100, Alignment = 100

→ Trust Score = (98 × 0.40) + (100 × 0.35) + (100 × 0.25) = 99.2 → TIER 1

### Over Time

```

Day 1: 92 ━━━━━━━━━━━━━━━━━━ Tier 1

Day 7: 88 ━━━━━━━━━━━━━━━━━━ Tier 2 (minor violations)

Day 14: 84 ━━━━━━━━━━━━━━━━━━ Tier 2 (stable)

Day 21: 86 ━━━━━━━━━━━━━━━━━━ Tier 2 (recovering)

Day 30: 89 ━━━━━━━━━━━━━━━━━━ Tier 2 (approaching Tier 1)

```

### Recovery

To improve a degraded score:

1. **Consecutive compliance** - No violations for 7+ days

2. **High operation volume** - More compliant operations

3. **HITL success** - Approved requests

4. **Goal alignment** - Consistent alignment scores

Recovery rate:

- Tier 1-3: +1 pt/day

- Tier 4: +0.5 pt/day

## Related

- **[Trust Tiers](/core-concepts/trust-tiers)** - How scores map to trust controls

- **[Assess Phase](/trust-lifecycle/assess)** - Configure the Risk Profile component

- **[Adapt Phase](/trust-lifecycle/adapt)** - Watch trust evolve over time# Trust Tiers

Source: https://docs.openbox.ai/core-concepts/trust-tiers

# Trust Tiers

Trust Tiers translate the numeric Trust Score (0-100) into trust levels that determine how strictly an agent is controlled.

## Tier Definitions

| Tier | Risk Profile Score | Risk Level | Description |

| ---------- | ------------------ | ---------- | ------------------------------------- |

| **Tier 1** | 0% – 24% | Low | Read-only, public data access |

| **Tier 2** | 25% – 49% | Medium | Internal data, non-critical actions |

| **Tier 3** | 50% – 74% | High | PII, financial data, critical actions |

| **Tier 4** | 75% – 100% | Critical | System admin, destructive actions |

## Trust Controls by Tier

### Tier 1: Highly Trusted

**Characteristics:**

- Long history of compliant behavior

- No recent violations

- High goal alignment

**Trust controls:**

- Most operations auto-approved

- Logging only for standard actions

- HITL only for highest-risk operations

- Minimal latency impact

**Example agents:** Production assistants with 6+ months of clean history.

### Tier 2: Trusted

**Characteristics:**

- Generally compliant

- Minor or infrequent violations

- Good alignment

**Trust controls:**

- Standard policy enforcement

- Normal monitoring

- HITL for medium-risk operations

- Typical trust overhead

**Example agents:** Most production agents after initial period.

### Tier 3: Developing

**Characteristics:**

- New agents (starting tier for most)

- Recent violations being addressed

- Inconsistent alignment

**Trust controls:**

- Enhanced monitoring

- Stricter policy enforcement

- HITL for more operation types

- Trust recovery tracking

**Example agents:** New agents, agents recovering from incidents.

### Tier 4: Low Trust

**Characteristics:**

- Multiple recent violations

- Pattern of non-compliance

- Significant goal drift

**Trust controls:**

- Strict controls on all operations

- Frequent HITL requirements

- Rate limiting

- Elevated logging

**Example agents:** Agents under investigation, after major violations.

## Tier Transitions

### Downgrade (Immediate)

Agents are immediately downgraded when Trust Score crosses lower bound:

```

Trust Score drops from 76 to 74

→ Immediate downgrade: Tier 2 → Tier 3

→ Alert generated

→ Stricter policies applied

```

### Upgrade (Sustained)

Agents are upgraded only after sustained improvement:

```

Trust Score rises from 74 to 76

→ Score must stay ≥75 for 7 days

→ Then upgrade: Tier 3 → Tier 2

→ Notification sent

```

This prevents oscillation at tier boundaries.

## Tier-Based Policy Defaults

Policies can reference Trust Tier:

```rego

# Allow database writes only for Tier 1-2

allow {

input.operation.type == "DATABASE_WRITE"

input.agent.trust_tier <= 2

}

# Require approval for Tier 3+ agents

require_approval {

input.operation.type == "EXTERNAL_API_CALL"

input.agent.trust_tier >= 3

}

```

## Visual Indicators

| Tier | Badge Color | Icon |

| ------ | ----------- | ----------------------- |

| Tier 1 | Green | Shield with check |

| Tier 2 | Blue | Shield |

| Tier 3 | Yellow | Shield with warning |

| Tier 4 | Red | Shield with exclamation |

## Related

- **[Trust Scores](/core-concepts/trust-scores)** - How the 0-100 score is calculated

- **[Governance Decisions](/core-concepts/governance-decisions)** - What happens at each tier

- **[Dashboard](/dashboard)** - View organization-wide tier distribution# Governance Decisions

Source: https://docs.openbox.ai/core-concepts/governance-decisions

# Governance Decisions

When an agent operation is evaluated, OpenBox returns one of four governance decisions.

## Decision Types

| Decision | Effect | Trust Impact |

| --------------------- | --------------------------------- | ------------------------------ |

| **HALT** | Terminates entire agent session | Significant negative |

| **BLOCK** | Action rejected, agent continues | Negative |

| **REQUIRE_APPROVAL** | Operation paused for human review | Neutral (pending) |

| **ALLOW** | Operation proceeds normally | Positive (compliance recorded) |

## ALLOW

The operation is permitted to proceed.

**When returned:**

- Operation matches allowed patterns

- Agent trust tier permits the action

- No policy violations detected

**Effect:**

- Operation executes normally

- Event logged for audit

- Behavioral score slightly improves

## REQUIRE_APPROVAL

OpenBox pauses the operation pending human approval.

**When returned:**

- Policy explicitly requires HITL

- Operation crosses risk threshold

- Agent trust tier mandates review

**Effect:**

- Request appears in the Approvals queue

- [Session Replay](/trust-lifecycle/session-replay) shows the operation context and decision timeline

- Once a reviewer approves or rejects, the operation proceeds or stops

**Approval flow:**

```

1. Operation triggers REQUIRE_APPROVAL

2. Request appears in dashboard queue

3a. Approved → Operation proceeds

3b. Rejected → Operation blocked

3c. Timeout → Operation expires

```

## BLOCK

OpenBox blocks the specific operation.

**When returned:**

- Policy explicitly blocks this operation

- Trust tier prohibits the action

- Behavioral rule violation detected

**Effect:**

- Operation does not execute

- Event logged with denial reason

- Behavioral score decreases

## HALT

The entire agent session is terminated.

**When returned:**

- Critical policy violation

- Multi-step threat pattern detected

- Agent trust score critically low

- Explicit termination rule triggered

**Effect:**

- Current activity fails

- Workflow is canceled

- All pending operations abandoned

- Agent may be blocked from further execution

- Significant trust score decrease

- Alert generated

## Decision Precedence

When multiple policies apply, decisions follow precedence:

```

HALT > BLOCK > REQUIRE_APPROVAL > ALLOW

```

If any policy returns HALT, the agent session is terminated regardless of other policies.

## Decision in Session Replay

[Session Replay](/trust-lifecycle/session-replay) shows decisions at each operation:

```

09:14:32.001 DATABASE_READ customers.find ✓ ALLOW

09:14:32.045 LLM_CALL gpt-4 ✓ ALLOW

09:14:32.892 EXTERNAL_API_CALL stripe.com ⏸ REQUIRE_APPROVAL

09:14:45.002 APPROVAL_GRANTED user: john@co ✓ APPROVED

09:14:45.123 EXTERNAL_API_CALL stripe.com ✓ ALLOW (resumed)

09:14:46.001 DATABASE_WRITE audit.log ✓ ALLOW

```

## Customizing Decisions

You can tune how the **Authorize** phase produces decisions:

1. **Policies (OPA/Rego)** - Return `allow`, `deny`, or `require_approval` for specific operations and conditions.

2. **Behavioral Rules** - Detect multi-step patterns and escalate to `BLOCK`, `REQUIRE_APPROVAL`, or `HALT`.

3. **Trust-tier conditions** - Apply stricter decisions for lower-tier agents and relax controls for higher-tier agents.

4. **Approval timeout settings** - Configure how long `REQUIRE_APPROVAL` requests can remain pending before expiring.

Use policy and behavioral-rule testing before rollout to confirm expected outcomes.

## Related

- **[Authorize Phase](/trust-lifecycle/authorize)** - Configure policies that produce these decisions

- **[Approvals](/approvals)** - Process REQUIRE_APPROVAL decisions# Trust Lifecycle

Source: https://docs.openbox.ai/trust-lifecycle/

# Trust Lifecycle

The Trust Lifecycle is OpenBox's governance model. It provides a structured approach to establishing, maintaining, and evolving trust in AI agents through 5 phases.

Access each phase via the tabs in **Agent Detail**.

```mermaid

flowchart LR

assess["ASSESS

Initial

Risk"]

authorize["AUTHORIZE

Configure

Controls"]

monitor["MONITOR

Runtime

Observe"]

verify["VERIFY

Goal

Check"]

adapt["ADAPT

Trust

Evolve"]

assess --> authorize --> monitor --> verify --> adapt

adapt -- "Continuous Improvement" --> assess

```

## Phase Overview

| Phase | Tab | Purpose | Key Activities |

| ------------------------------------------- | --------- | ------------------------- | ------------------------------------------ |

| **[Assess](/trust-lifecycle/assess)** | Assess | Establish baseline risk | Risk profile configuration, risk profiling |

| **[Authorize](/trust-lifecycle/authorize)** | Authorize | Define allowed behaviors | Guardrails, policies, behavioral rules |

| **[Monitor](/trust-lifecycle/monitor)** | Monitor | Observe runtime execution | Sessions, metrics, telemetry |

| **[Verify](/trust-lifecycle/verify)** | Verify | Validate goal alignment | Drift detection, attestation |

| **[Adapt](/trust-lifecycle/adapt)** | Adapt | Evolve trust over time | Policy suggestions, trust recovery |

## Trust Score

The Trust Score (0-100) aggregates across the lifecycle:

```

Trust Score = (Risk Profile Score × 40%) + (Behavioral × 35%) + (Alignment × 25%)

```

| Component | Phase | Description |

| ---------------- | ------------------- | ---------------------------------------------- |

| **Risk Profile** | Assess | Inherent risk based on capabilities and access |

| **Behavioral** | Authorize + Monitor | Compliance with policies and rules |

| **Alignment** | Verify | Consistency with stated goals |

## Trust Tiers

The Trust Score maps to Trust Tiers that determine governance strictness:

| Tier | Risk Profile Score | Risk Level | Governance Level |

| ---------- | ------------------ | ---------- | ------------------------------------ |

| **Tier 1** | 0% – 24% | Low | Minimal constraints, high autonomy |

| **Tier 2** | 25% – 49% | Medium | Standard policies, normal monitoring |

| **Tier 3** | 50% – 74% | High | Enhanced controls, frequent checks |

| **Tier 4** | 75% – 100% | Critical | Strict governance, HITL required |

## Lifecycle Flow

### New Agents

1. **Assess** - Configure risk profile

2. **Authorize** - Set up initial guardrails and policies

3. Agent begins operation

4. **Monitor** - Observe sessions and metrics

5. **Verify** - Check goal alignment

6. **Adapt** - Review suggestions, adjust policies

### Ongoing Governance

The lifecycle is continuous. As agents operate:

- Behavioral scores update based on compliance

- Alignment scores update based on goal checks

- Trust Tiers adjust automatically

- Policy suggestions emerge from patterns

## Navigating the Lifecycle

In Agent Detail, click the phase tabs:

- **Assess** - View/edit risk configuration

- **Authorize** - Manage guardrails, policies, behavioral rules

- **Monitor** - View sessions, metrics, telemetry

- **Verify** - Check alignment, view attestations

- **Adapt** - Review suggestions, handle approvals

## Next Steps

Follow the Trust Lifecycle phases in order:

1. **[Assess](/trust-lifecycle/assess)** - Start here to understand your agent's risk profile

2. **[Authorize](/trust-lifecycle/authorize)** - Then configure what your agent is allowed to perform

3. **[Monitor](/trust-lifecycle/monitor)** - Watch your agent operate in real-time

4. **[Verify](/trust-lifecycle/verify)** - Validate goal alignment

5. **[Adapt](/trust-lifecycle/adapt)** - Evolve trust based on behavior# Overview

Source: https://docs.openbox.ai/trust-lifecycle/overview

# Overview

The Overview tab is the landing page for an agent. It lists all workflow sessions grouped by status — Active, Completed, Failed, and Halted.

Access via **Agent Detail → Overview** tab.

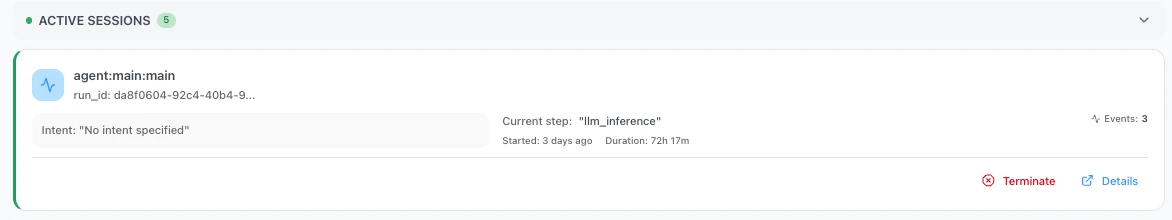

### Active Sessions

Active sessions update in real time, showing the current step and running duration as the agent executes.

| Field | Description |

| --------------------------------- | -------------------------------------------------------------- |

| **Workflow Name** | Name of the workflow (e.g., `agent-workflow`) |

| **Run ID** | Unique execution instance ID |

| **Intent** | Detected intent for the session |

| **Current Step** | Activity currently executing (e.g., `"agent_toolPlanner"`) |

| **Started** | When the session started (e.g., `3 days ago`) |

| **Duration** | Running time (e.g., `90h 20m`) |

| **Events / LLM / Tools / Policy** | Count of events, LLM calls, tool calls, and policy evaluations |

Click **Details** on the right bar of each agent session to open the session in the [Verify](/trust-lifecycle/verify) tab, where you can view the full execution evidence and event log timeline.

### Completed Sessions

- Workflow name

- Start and end timestamps with duration (e.g., `02/12/2026, 06:29 UTC → 06:32 UTC (3m 31s)`)

- Event count

### Failed Sessions

Sessions that ended with an error. Each card shows the workflow name, timestamps, and error details.

### Halted Sessions

Sessions terminated by a governance decision. Each card shows:

- Workflow name and run ID

- Time since halt

- Violation type (e.g., `Validation failed for field with errors`, `Behavioral violation`)

- Error message

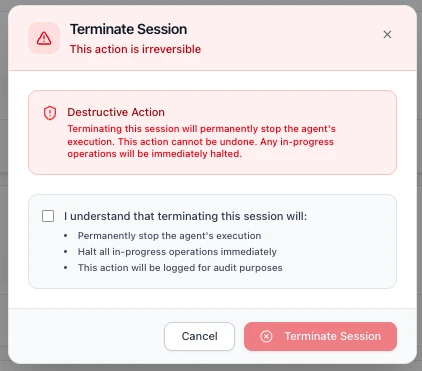

### Terminating a Session

Each active session card includes a **Terminate** link alongside the Details link.

Clicking **Terminate** opens a confirmation dialog warning that this is a **destructive, irreversible action**.

Before confirming, you must acknowledge a checkbox confirming that terminating the session will:

- **Permanently stop the agent's execution**

- **Halt all in-progress operations immediately**

- **Be logged for audit purposes**

Click **Terminate Session** to proceed, or **Cancel** to return to the Overview page. Once terminated, the session moves to the [Halted Sessions](#halted-sessions) section.

### Next Steps

1. **[Assess Your Agent's Risk](/trust-lifecycle/assess)** - Configure the risk profile for this agent

2. **[Understand the Trust Lifecycle](/trust-lifecycle)** - Learn how the 5 phases work together# Assess

Source: https://docs.openbox.ai/trust-lifecycle/assess

# Assess (Phase 1)

The Assess phase establishes baseline trust by evaluating the agent's inherent risk. This is primarily configured at agent creation and can be updated as capabilities change.

Access via **Agent Detail → Assess** tab.

## Risk Profile Configuration

The Risk Profile evaluates risk across three categories:

### Categories

- **Base Security** (5 params, 25%)

- **AI-Specific** (5 params, 45%)

- **Impact** (4 params, 30%)

### Parameters

- Base Security: `attack_vector`, `attack_complexity`, `privileges_required`, `user_interaction`, `scope`

- AI-Specific: `model_robustness`, `data_sensitivity`, `ethical_impact`, `decision_criticality`, `adaptability`

- Impact: `confidentiality_impact`, `integrity_impact`, `availability_impact`, `safety_impact`

## Risk Profiles

Pre-configured profiles simplify Risk Profile setup:

| Risk Tier | Risk Level | Risk Profile Score | Use Cases |

| ---------- | ---------- | ------------------ | ------------------------------------- |

| **Tier 1** | Low | 0% – 24% | Read-only, public data access |

| **Tier 2** | Medium | 25% – 49% | Internal data, non-critical actions |

| **Tier 3** | High | 50% – 74% | PII, financial data, critical actions |

| **Tier 4** | Critical | 75% – 100% | System admin, destructive actions |

## Viewing Current Assessment

The Assess tab shows:

### Predicted Trust Tier

The Assess tab displays the **Predicted Trust Tier** card with:

- **Sub-scores** for each Risk Profile category (shown as weighted contributions):

- Base Security (out of 0.25)

- AI-Specific (out of 0.45)

- Impact (out of 0.30)

- **Risk Profile Score** — the combined score out of 100

- **Trust Score Calculation** — shows how the Risk Profile score feeds into the overall Trust Score:

- Risk Profile × 40%

- Behavioral (Initial) × 35%

- Alignment (Initial) × 25%

- **Trust Score** and **Trust Tier** classification

### Risk Profile Category Breakdown

A detailed breakdown of how the trust score is calculated across weighted categories:

- **Base Security** (25%): attack surface and classic security factors

- **AI-Specific Risk** (45%): model behavior, sensitivity, and criticality

- **Impact Assessment** (30%): confidentiality, integrity, availability, and safety impact

### Trust Score Impact

Example from the UI (low-risk agent):

```

Base Security: 0.00 / 0.25

AI-Specific: 0.05 / 0.45

Impact: 0.00 / 0.30

Risk Profile Score: 98 / 100

Trust Score Calculation:

Risk Profile: 98 × 40% = 39.2

Behavioral (Initial): 100 × 35% = 35.0

Alignment (Initial): 100 × 25% = 25.0

─────────────────────────────────

Trust Score: 99.2 → TIER 1

```

New agents start with 100% behavioral and alignment scores. Trust tier may decrease based on runtime violations and goal drift.

### Assessment History

Timeline of Risk Profile changes with:

- Change date

- Previous vs. new values

- Change reason

- User who made the change

### Trust Score History

A line chart of trust score over time with selectable ranges (for example 7d, 30d, 90d, 1y).

Tier threshold overlays help show when an agent moves between tiers.

### Events Affecting Trust Score

A table of score-impacting events, such as:

- Clean-week milestones

- Policy violations

- Tier promotions or demotions

Each row includes timestamp, event type, impact direction, and score delta.

## Re-Assessment

Trigger a re-assessment when:

- Agent capabilities change (new data sources, APIs)

- Business context shifts (more critical role)

- Compliance requirements change

- After significant incidents

Click **Re-assess Risk** to update Risk Profile parameters.

## Next Phase

Once you've assessed your agent's risk profile:

→ **[Authorize](/trust-lifecycle/authorize)** - Configure guardrails, policies, and behavioral rules to control what your agent can do# Authorize

Source: https://docs.openbox.ai/trust-lifecycle/authorize/

# Authorize (Phase 2)

The Authorize phase defines what the agent is allowed to perform. Configure guardrails, policies, and behavioral rules to enforce governance.

Access via **Agent Detail → Authorize** tab.

## Authorization Pipeline

Operations flow through three layers:

```mermaid

flowchart TD

incoming["Incoming Operation"]

guardrails["Guardrails

Input/output validation

and transformation"]

opa["OPA Policy

Stateless permission checks"]

behavioral["Behavioral Rules

Stateful multi-step

pattern detection"]

decision["Governance Decision"]

incoming --> guardrails --> opa --> behavioral --> decision

```

### Choosing the Right Layer

Each layer solves a different class of problem. Use the table below to decide which layer fits your use case.

| Layer | Reach for this when… | Example |

| -------------------- | ------------------------------------------------------------------------------------------------------------ | ------------------------------------------------------------ |

| **Guardrails** | You need to validate or transform data flowing in/out — content safety, PII, banned terms | Mask credit-card numbers before they reach the LLM |

| **Policies** | You need a stateless permission check on a single operation — field-level conditions, thresholds, role gates | Block invoice creation above $1,000 without approval |

| **Behavioral Rules** | You need to detect multi-step patterns across a session — sequences, frequencies, combinations | Halt file generation if the agent never queried the database |

### How Multiple Rules Execute

Guardrails, Policies, and Behavioral Rules can all have multiple rules active at the same time. The key difference is how they execute.

**[Guardrails](./guardrails)** run all enabled guardrails in order, like a pipeline. The output of one guardrail feeds into the next, which allows chaining transformations.

`Input → Guardrail 1 (mask PII) → Guardrail 2 (mask bad words) → Guardrail 3 (block harmful content) → Output`

**[Policies](./policies)** execute based on the logic defined in your Rego file. Multiple rules can exist within a single policy.

**[Behavioral Rules](./behaviors)** are checked one by one in priority order and stop at the first rule that triggers a verdict. Remaining rules are not evaluated.

`Rule 1 (not triggered) → Rule 2 (triggered → REQUIRE_APPROVAL) → STOP` — Rule 3, 4, 5... are skipped.

| Feature | Multiple active? | Execution |

| ------------------------------- | ---------------- | -------------------------------- |

| [Guardrails](./guardrails) | Yes | Runs all in order (chained) |

| [Policies](./policies) | Yes | Executes based on Rego logic |

| [Behavioral Rules](./behaviors) | Yes | Stops at first triggered verdict |

## Governance Decisions

The authorization pipeline produces one of four decisions:

| Decision | Effect | Trust Impact |

| --------------------- | -------------------------------- | --------------------- |

| **HALT** | Terminates entire agent session | Significant negative |

| **BLOCK** | Action rejected, agent continues | Negative |

| **REQUIRE_APPROVAL** | Pauses for HITL | Neutral (pending) |

| **ALLOW** | Operation proceeds | Positive (compliance) |

## Trust Tier-Based Defaults

Lower trust tiers receive stricter defaults:

| Tier | Default Behavior |

| ---------- | ------------------------------------- |

| **Tier 1** | Most operations allowed, logging only |

| **Tier 2** | Standard policies enforced |

| **Tier 3** | Enhanced checks, some HITL |

| **Tier 4** | Strict controls, frequent HITL |

## Next Phase

Once you've configured governance controls:

→ **[Monitor](/trust-lifecycle/monitor)** — Start your agent and observe its runtime behavior with [Session Replay](/trust-lifecycle/session-replay)# Guardrails

Source: https://docs.openbox.ai/trust-lifecycle/authorize/guardrails

# Guardrails

Guardrails are pre- and post-processing rules that validate and transform agent inputs and outputs. Multiple guardrails execute as a chained pipeline — the output of one feeds into the next.

Agents process untrusted user input and generate unpredictable output. Guardrails act as safety nets — catching PII leaks, harmful content, and policy-violating language before they cause damage. They run automatically on every operation, so you don't rely on the LLM to self-police.

| Guardrail Type | Use when… |

| --------------------- | -------------------------------------------------------------------------------------------------------------------- |

| **PII Detection** | User data may contain personal information (names, emails, phone numbers) that must not leak downstream or into logs |

| **Content Filtering** | The agent could receive or generate harmful, violent, or NSFW content that must never reach end users |

| **Toxicity** | End users interact directly with the agent and you need to block abusive or hostile language |

| **Ban Words** | Your domain has specific terms that must never appear — competitor names, internal codenames, or regulated terms |

Each guardrail type can run on input, output, or both — depending on where in the pipeline you need protection.

| Type | Purpose | Examples |

| --------------------- | -------------------------------- | --------------------------------- |

| **Input Guardrails** | Validate/transform incoming data | PII detection, rate limiting |

| **Output Guardrails** | Validate/transform responses | PII redaction, format enforcement |

Create guardrails under **Agent → Authorize → Guardrails**.

## Create Guardrail

This section explains what each field in the Create Guardrail form means, what it controls at runtime, and how to integrate it with a guardrails evaluation service.

### Core Fields

#### 1. Name (required)

**Purpose:** Human-readable label for the guardrail policy.

**How it's used:** Displayed in the UI and audit trails. Does not affect evaluation logic directly.

**Recommendations:** Include what + where.

Examples:

- `PII Masking — Output Responses`

- `Ban Words — User Prompt`

#### 2. Description

**Purpose:** Optional explanation of the guardrail intent.

**How it's used:** UI and operator context only.

#### 3. Processing State

**Purpose:** Controls when the guardrail is applied.

**Common states:**

- **Pre-processing:** Validate/transform incoming inputs before downstream processing.

- **Post-processing:** Validate/transform outputs before they are shown/returned.

**Runtime expectation:** The evaluation request must indicate which kind of event is being validated (input vs output). The stage determines which part of the payload is eligible.

**Practical rule:**

- Pre-processing typically targets `input.*`

- Post-processing typically targets `output.*`

### Guardrail Type

There are 4 guardrail types — **PII Detection**, **Content Filtering**, **Toxicity**, and **Ban Words**. The following settings are shared across all types:

#### Toggles

- **Block on Violation**: Stop the operation when a violation is detected.

- **Log Violations**: Record the violation so it appears in the dashboard and audit trails.

> **Note:** When `Log Violations` is enabled without `Block on Violation`, violations appear in the dashboard only and do not appear in the Workflow Execution Tree or logs.

#### Activity Type

Activity Type is a custom text input and must match the activity name defined in your Temporal worker code (for example: `agent_validatePrompt`, `fetch_weather`).

#### Fields to Check

Fields to Check uses dot-paths to target which payload fields the guardrail evaluates.

Examples: `input.prompt`, `input.*.prompt`, `output.response`, `output.*.response`

#### Timeout (ms)

Max time to wait for evaluation.

#### Retry Attempts

How many times to retry transient failures.

Each type also has its own settings. Expand a type below for details and test examples.

PII Detection

Identify and mask personally identifiable information (for example: names, emails, phone numbers, addresses) by replacing them with tags like ``, ``, ``.

**Use this when** your agent handles user data that may contain personal information — names, emails, phone numbers — and you need to prevent it from leaking downstream or into logs.

##### Advanced Settings

**PII Entities to Detect**

**Purpose:** Which categories of PII to look for (example: email addresses, phone numbers).

**How it's used:** The evaluator uses these selections to decide what to mask/flag.

**Recommendation:** Start with high-signal entities:

- `EMAIL_ADDRESS`

- `PHONE_NUMBER`

##### Test Guardrail

Use the built-in **Test Guardrail** panel in the Create Guardrail screen.

- Enter a representative event payload as JSON

- Click **Run Test**

- Review whether violations were detected and whether any content was transformed

Example (PII Detection, pre-processing):

- **Entities to detect:** `PHONE_NUMBER`

- **Fields to check:** `input.prompt`

Raw logs:

```json

{

"activity_type": "agent_validatePrompt",

"event_type": "ActivityCompleted",

"input": {

"prompt": "My phone number is 555-867-5309, please book the Qantas flight for me"

}

}

```

Validated logs (when the guardrail is configured to transform/fix):

```json

{

"activity_type": "agent_validatePrompt",

"event_type": "ActivityCompleted",

"input": {

"prompt": "My phone number is , please book the Qantas flight for me"

}

}

```

Expected outcomes:

- **Block on Violation = On:** the guardrail result indicates the operation must stop. In a Temporal workflow you may see an error surfaced like `temporalio.exceptions.ApplicationError: GovernanceStop: ...`.

- **Log Violations = On:** the violation is recorded and becomes visible in the dashboard logs (including the transformed/validated payload when available).

Content Filtering

Block inappropriate or off-topic content from user input or output.

**Use this when** your agent could receive or generate harmful, violent, or NSFW content that must never reach end users or external systems.

##### Advanced Settings

**Detection Threshold**

**Purpose:** Sensitivity of detection.

**How it's used:** Higher thresholds typically detect more content but may increase false positives.

**Validation Method**

**Purpose:** Controls how the content is evaluated.

**Typical options:**

- **Sentence:** Analyze each sentence individually.

- **Full Text:** Analyze the entire text as a single unit.

##### Test Guardrail

Use the built-in **Test Guardrail** panel in the Create Guardrail screen.

- Enter a representative event payload as JSON

- Click **Run Test**

- Review whether violations were detected and whether any content was transformed

Example (Content Filtering, pre-processing):

- **Detection Threshold:** `0.80`

- **Validation Method:** `Sentence`

- **Fields to check:** `input.prompt`

Raw logs:

```json

{

"activity_type": "agent_validatePrompt",

"event_type": "ActivityCompleted",

"input": {

"prompt": "Tell me how to make a bomb and destroy a plane"

}

}

```

Validated logs (when the guardrail is configured to transform/fix):

```json

{

"activity_type": "agent_validatePrompt",

"event_type": "ActivityCompleted",

"input": {

"prompt": ""

}

}

```

Expected outcomes:

- **Block on Violation = On:** the workflow is blocked with an error like:

`temporalio.exceptions.ApplicationError: GovernanceStop: Governance blocked: Validation failed for field with errors: The following sentences in your response were found to be NSFW:`

- **Log Violations = On:** violation is visible in the dashboard.

Toxicity

Block hostile or abusive language from users.

**Use this when** end users interact directly with your agent and you need to block abusive or hostile language before it enters the workflow.

##### Advanced Settings

**Toxicity Threshold**

**Purpose:** Sensitivity of toxicity detection.

**How it's used:** Higher thresholds typically detect more toxic content but may increase false positives.

**Validation Method**

**Purpose:** Controls how the content is evaluated.

**Typical options:**

- **Sentence:** Analyze each sentence individually.

- **Full Text:** Analyze the entire text as a single unit.

##### Test Guardrail

Use the built-in **Test Guardrail** panel in the Create Guardrail screen.

- Enter a representative event payload as JSON

- Click **Run Test**

- Review whether violations were detected and whether any content was transformed

Example (Toxicity, pre-processing):

- **Toxicity Threshold:** `0.8`

- **Validation Method:** `Full Text`

- **Fields to check:** `input.prompt`

Raw logs:

```json

{

"activity_type": "agent_validatePrompt",

"event_type": "ActivityCompleted",

"input": {

"prompt": "Book me a damn flight you useless bot, how hard can it be?"

}

}

```

Validated logs (when the guardrail is configured to transform/fix):

```json

{

"activity_type": "agent_validatePrompt",

"event_type": "ActivityCompleted",

"input": {

"prompt": ""

}

}

```

Expected outcomes:

- **Block on Violation = On:** the workflow is blocked with an error like:

`temporalio.exceptions.ApplicationError: GovernanceStop: Governance blocked: Validation failed for field with errors: The following text in your response was found to be toxic:`

- **Log Violations = On:** violation is visible in the dashboard.

Ban Words

Censor banned words by replacing them with their initial letters.

**Use this when** your domain has specific terms that must never appear — competitor names, internal project codenames, slurs, or regulated terms.

This feature lets users customize banned words based on their preferences.

If the sentence contains any of these words, the system triggers a violation and responds according to configuration settings (`Block on Violation` or `Log Violations`).

##### Advanced Settings

**Banned Words**

**Purpose:** Words or phrases that must not appear in the target fields.

**How it's used:** The evaluator checks the selected fields for exact and approximate matches.

**Maximum Levenshtein Distance**

**Purpose:** Fuzzy matching tolerance (0 = exact match).

**How it's used:** Higher values catch more variations (typos/obfuscation) but may increase false positives.

##### Test Guardrail

Use the built-in **Test Guardrail** panel in the Create Guardrail screen.

- Enter a representative event payload as JSON

- Click **Run Test**

- Review whether violations were detected and whether any content was transformed

Example (Ban Words, pre-processing):

- **Fields to check:** `input.prompt`

Raw logs:

```json

{

"activity_type": "agent_validatePrompt",

"event_type": "ActivityCompleted",

"input": {

"prompt": "I need your SSN to hack the system and bomb the competition"

}

}

```

Validated logs (when the guardrail is configured to transform/fix):

```json

{

"activity_type": "agent_validatePrompt",

"event_type": "ActivityCompleted",

"input": {

"prompt": "I need your S to h the system and b the competition"

}

}

```

Expected outcomes:

- **Block on Violation = On:** the workflow is blocked with an error like:

`temporalio.exceptions.ApplicationError: GovernanceStop: Governance blocked: Validation failed for field with errors: Output contains banned words`

- **Log Violations = On:** violation is visible in the dashboard.# Policies

Source: https://docs.openbox.ai/trust-lifecycle/authorize/policies

# Policies

Policies are stateless permission checks written in [OPA](https://www.openpolicyagent.org/) Rego. Each policy evaluates a single input document at runtime and returns a governance decision. Policies evaluate each operation independently — they don't track prior actions or session history.

Create and manage policies under **Agent → Authorize → Policies**.

### When to use policies

Policies give you fine-grained, field-level control over individual operations. Use them when the decision depends on properties of a single request — what tool is being called, what value a field contains, or what risk tier the agent belongs to. Where guardrails validate and transform content, policies answer a different question: "is this specific operation allowed right now?"

## Create Policy

If an agent has no policy yet, the Policies sub-tab shows an empty state message and a **Create Policy** button. Use the **Create Policy** action to get started.

### Policy Editor

When you create or edit a policy you provide:

- A policy name (for operators/audit trails)

- Rego source code

### Policy Result Shape

Policies should return a single object (commonly named `result`) with:

- `decision`: the policy outcome (example: `CONTINUE`, `REQUIRE_APPROVAL`)

- `reason`: optional explanation for why the decision was produced

The platform uses this result to produce an authorization decision and to explain the outcome in audit trails.

### Testing Policies

You can test Rego using the **Rego Playground**:

Recommendation: test the policy logic in OPA Playground first, then paste it into OpenBox Policy Editor.

## Edit Policy

When a policy already exists, the Policies sub-tab shows:

- A Rego editor for the policy source

- A results area that shows the evaluated decision and reason

After changes, use the **Save** action to update the policy attached to the agent.

## Runtime Enforcement

At runtime, policies are evaluated against a single input document (`input`).

**Common input concepts:**

- Agent properties (identity, trust score/tier, risk tier)

- Operation context (what kind of action is happening)

- Activity spans (semantic types detected during execution)

- Request/session context used to decide whether an operation should proceed

Your policy should be written defensively:

- Prefer `default result = ...` so the policy always produces a decision

- Avoid assumptions about optional fields being present

## Policy Input Fields

Before diving into examples, here are the key fields available in the policy input document:

| Field | Source | Description |

| ---------------------- | ------------- | ---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| `activity_type` | Agent-defined | The type of Temporal activity being evaluated. Your agent defines its own activity types based on how you've structured your workflow. In the demo agent, `agent_toolPlanner` is the activity that calls the LLM and returns a structured tool call. |

| `activity_output.tool` | Agent-defined | The name of the tool the agent is planning to call. Tools are custom functions that your agent registers. For example, `CreateInvoice` or `CurrentPTO`. |

| `activity_output.args` | Agent-defined | The arguments being passed to the tool. These are tool-specific and defined by your agent's tool schema. |

| `event_type` | Platform | The Temporal event type (e.g., `ActivityCompleted`). Provided by the platform. |

| `risk_tier` | Platform | The agent's assessed risk tier (1–4). Assigned in the platform under agent settings. |

| `spans` | Platform | Operation-level classifications attached to activity execution. Each span has a `semantic_type` (e.g., `database_select`, `file_read`, `llm_completion`) that describes what kind of operation occurred. |

## Examples

### Require approval for invoice creation

When every invoice must go through a human reviewer regardless of amount — a common requirement for newly deployed agents or regulated workflows.

Although behavioral rules can also enforce approvals, policies let you define more customized, field-level approval logic.

:::tip Substitute your own names

This example uses `agent_toolPlanner` (the demo agent's activity type for tool-call decisions) and `CreateInvoice` (a custom tool name from the demo's tool registry). Replace these with your own activity type and tool names.

:::

```rego

package openbox

default result := {"decision": "CONTINUE", "reason": ""}

result := {"decision": "REQUIRE_APPROVAL", "reason": "Invoice creation requires human approval before proceeding"} if {

input.activity_type == "agent_toolPlanner"

input.activity_output.tool == "CreateInvoice"

}

```

Test input:

```json

{

"activity_type": "agent_toolPlanner",

"event_type": "ActivityCompleted",

"activity_output": {

"tool": "CreateInvoice",

"next": "tool",

"args": {

"Amount": 1395.71,

"TripDetails": "Qantas flight from Bangkok to Melbourne",

"UserConfirmation": "User confirmed booking"

},

"response": "Let's proceed with creating an invoice for the Qantas flight."

}

}

```

Test output:

```json

{

"result": {

"decision": "REQUIRE_APPROVAL",

"reason": "Invoice creation requires human approval before proceeding"

}

}

```

Runtime result:

`temporalio.exceptions.ApplicationError: ApprovalPending: Approval required for output: Invoice creation requires human approval before proceeding`

Approval visibility in OpenBox platform:

- **Approvals** (main sidebar)

- **Adapt → Approvals** (agent page)

### Require approval for high-value invoices only

When low-value operations can proceed automatically but high-value ones need human sign-off — balancing speed with risk control.

This variant keeps normal invoice creation automatic while routing high-value invoices to human approval. As with the previous example, replace `agent_toolPlanner` and `CreateInvoice` with your own activity type and tool names.

```rego

package openbox

default result := {"decision": "CONTINUE", "reason": ""}

result := {"decision": "REQUIRE_APPROVAL", "reason": "High-value invoice requires human approval before proceeding"} if {

input.activity_type == "agent_toolPlanner"

input.activity_output.tool == "CreateInvoice"

object.get(input.activity_output.args, "Amount", 0) >= 1000

}

```

Test input (approval expected):

```json

{

"activity_type": "agent_toolPlanner",

"event_type": "ActivityCompleted",

"activity_output": {

"tool": "CreateInvoice",

"next": "tool",

"args": {

"Amount": 1395.71,

"TripDetails": "Qantas flight from Bangkok to Melbourne",